Discover the key players that are laying the groundwork for the metaverse’s future, and the innovations and aesthetics they are known for, from Roblox’s ‘low poly’ visuals to the hyper-realistic avatars of Wolf Digital World.

Executive summary

The metaverse is still very much in its infancy (in fact, the word is not yet recognised by the Merriam-Webster dictionary), but many early developments are setting the stage for this alternate digital realm, which will continue to evolve as the technology that fuels it improves over time.

Analysis

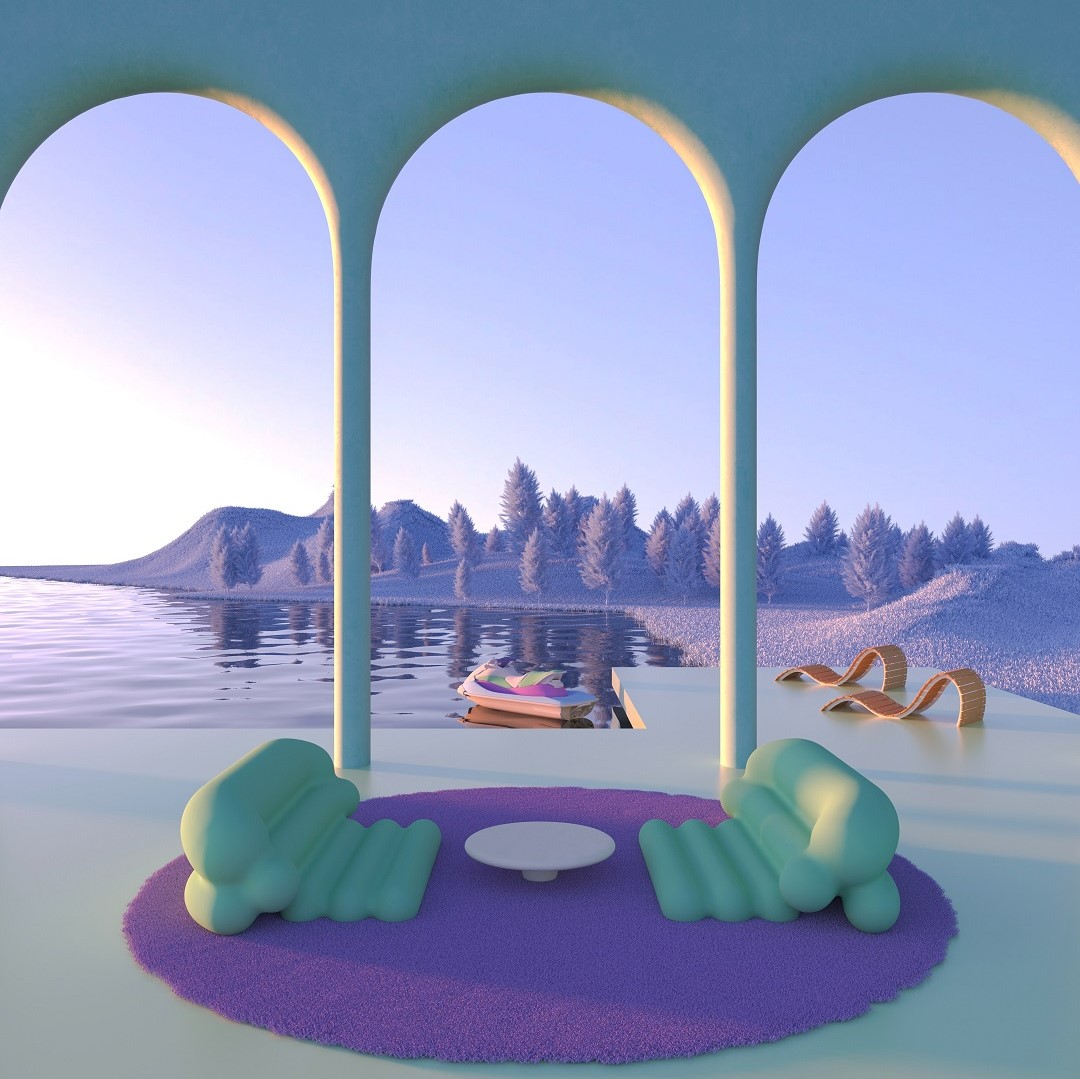

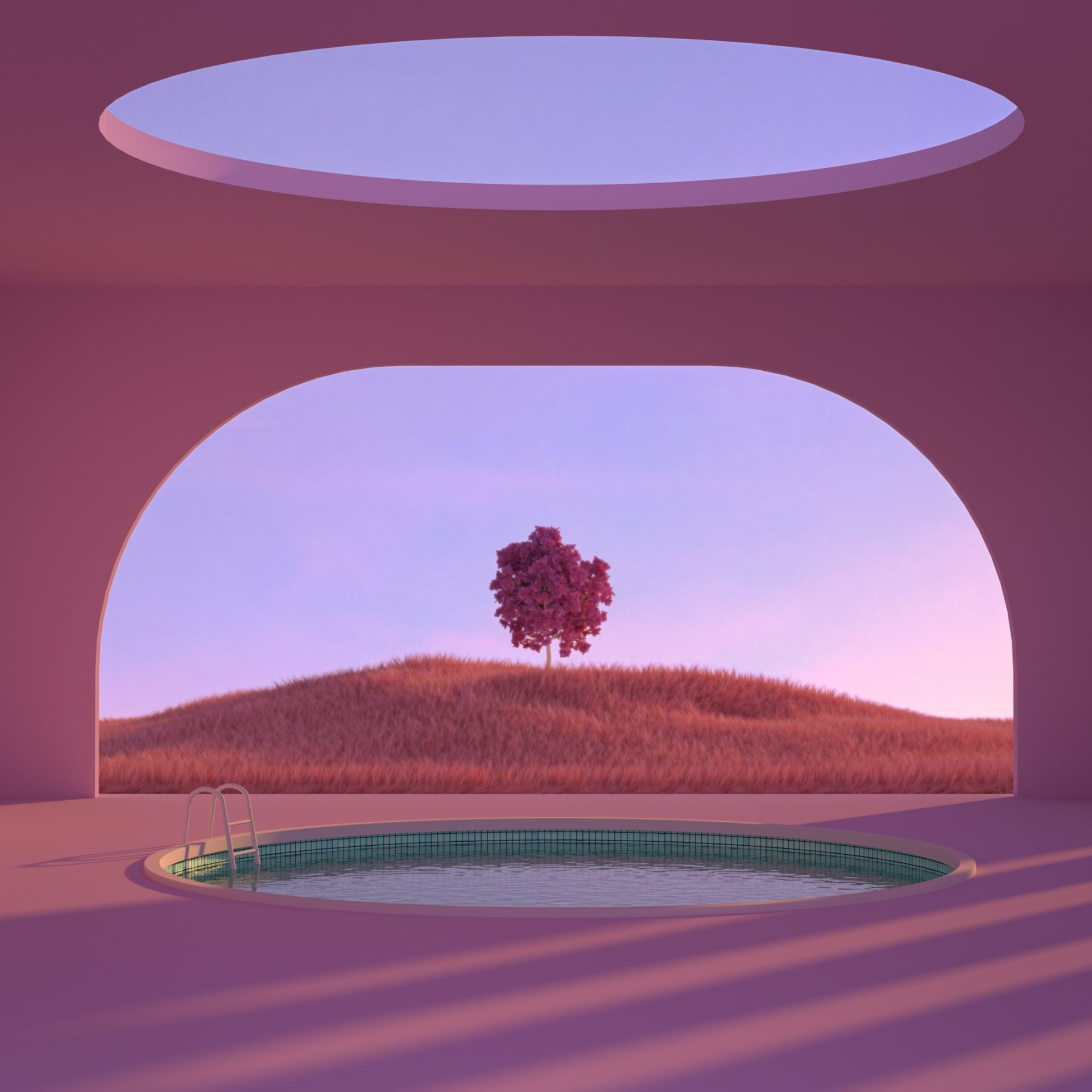

In the future, the digital and physical worlds will blend together as experiences move beyond computer and phone screens into an omnipresent digital world. The digital realm offers more creative freedom, even though the technology that currently drives the metaverse places some limits on its aesthetics.

As technology evolves to deliver the processing power that high-fidelity, realistic rendering demands, a wide range of aesthetics will emerge, pairing real-life elements with an imaginative digital twist.

The digital world has no bounds and is fuelling the creativity of digital artists and consumers, who can now customise their own avatars, digital environments and NFTs to fit their personalities and moods. Newer technologies have more powerful rendering engines, with correspondingly higher hardware demands: NVIDIA Omniverse, for example, requires an NVIDIA RTX GPU with support for DirectX Raytracing and NVIDIA’s Vulkan Ray Tracing extensions. Technologies like NVIDIA Omniverse and Epic Games’ Unreal Engine are helping to bring more realism to avatars and environments in the metaverse.

What does this mean for you?

It is important to know what your technology is capable of creating, and if necessary, to partner with experienced 3D designers who are familiar with the complex technology being used, in order to achieve optimal results.

This report explores some of the major players shaping the metaverse, their key strategies, and the aesthetics associated with them.

#Roblox

The mega-popular online gaming platform Roblox, together with its game creation system Roblox Studio, has been used by children and people of all ages since its launch in 2006. Roblox Studio is a free, immersive creation engine for building content that can be played on smartphones, tablets, desktops, consoles and virtual reality devices. It is Roblox’s proprietary engine and uses Luau, a modified version of the lightweight programming language Lua. Roblox’s low-poly graphics are optimised to run on a wide range of devices and don’t require much processing power.

✔︎How you can action this: Roblox offers an accessible way to test low-poly aesthetics on one of the most widely used metaverse experiences available.

#Meta

Meta states it is moving beyond 2D screens and into immersive experiences like virtual and augmented reality, helping to create the next evolution of social technology. Crayta, a collaborative game creation platform built on Unreal Engine 4, was acquired by Meta (then Facebook) in June 2021. This cloud streaming platform invites users of all skill levels to create and play user-generated games on mobile or desktop devices, which means its graphics must be achievable with the lowest possible system requirements. Meta is setting the stage for the metaverse at a mass-market level. The imagery within this version of the metaverse will naturally be limited by its low computing-power requirements, but it will still engage younger, less demanding consumers.

✔︎How you can action this: be cognisant of how aesthetics develop in Meta’s metaverse. As computing power increases for the masses, the aesthetics will improve, so be ready to adjust accordingly.

#Apple

Apple is expected to launch its long-anticipated VR headset and AR glasses sometime in 2023 or 2024. Whenever that hardware comes to fruition, the company has already paved the way for a rich metaverse experience with enhancements to its current hardware as well as 3D software innovations.

Apple has developed its own software, including Object Capture, which is able to turn photos from an iPhone or iPad into high‑quality 3D models that are optimised for AR. This is part of Apple’s RealityKit framework, which was built specifically for augmented reality with photo-realistic rendering capability.

Taking this a step further, newer iPhones and iPads are equipped with depth-sensing LiDAR technology, which allows for laser-guided measurements in physical space, the same kind of tech that AR headsets and self-driving cars already use. Together with Apple’s ARKit, a suite of AR creation tools for iOS devices, this allows users to easily build augmented reality environments. On top of that, Apple’s Metal graphics API is capable of delivering realistic and immersive real-time 3D graphics.

✔︎How you can action this: Apple is set to provide an extensive hardware and software ecosystem that will support high-quality 3D graphics in the metaverse for years to come. Stay tuned!

#Wolf3D

Wolf3D is the creator of the cross-game avatar platform Ready Player Me, which enables users to create an avatar that they can use across many virtual experiences. Its software gives developers an easy-to-use tool for building avatar-based experiences.

Founded in 2014 and based in Estonia, Wolf3D’s first product was a hardware-based 3D scanner. The company scanned more than 20,000 people and collected a large database of high-quality face scans. This proprietary database allowed it to build a deep-learning solution that creates virtual avatars from a single selfie on any device, which can then be used in different gaming and virtual applications. Wolf3D works with RTFKT, Tencent, Huawei, HTC, Vodafone and TCL, among many other tech companies.

Since the avatars are designed to be used across a wide variety of games and virtual apps, they have a low-poly aesthetic. This allows a game to be played by a larger audience, as users won’t need high-end computers to run it.

✔︎How you can action this: explore integrating Wolf3D avatars, which can be used across multiple apps and games, into games or virtual experiences targeted at a wide variety of users.

#RTFKT

Nike is rapidly expanding its digital presence in the metaverse, starting with its acquisition of RTFKT Studios, a digital fashion and accessories design studio. RTFKT’s futuristic designs, fresh colour combinations and colour effects have captured the world’s attention. In the announcement, Nike’s CEO said this acquisition will be used to serve “athletes and creators at the intersection of sport, creativity, gaming and culture”.

RTFKT uses emerging technologies such as game engines, blockchain validation and augmented reality to create immersive virtual products, which will soon be available as physical ones too. The brand has created items ranging from digital sneakers and garments to collectible avatars. These items exist on the blockchain as NFTs and are planned for use in gaming and augmented reality across different online environments.

RTFKT’s style is engaging, with bold graphical elements, vivid colours with gradation effects and shiny metallic accents on stylised forms. Innovative use of forward-thinking colours and materials is what sets Nike apart from its competitors, so the union with RTFKT makes complete sense.

✔︎How you can action this: RTFKT is a leader in digital asset creation and an outstanding use case for powerful partnerships, creativity and monetisation.

#Daz3D

Founded in 2000, Daz 3D is a 3D modelling software and content company specialising in morphable, posable human models and NFT creations. Used by top brands and illustrators, its modelling software, Daz Studio, gives amateur and professional graphic artists a free, time-saving tool to customise their creations. Daz 3D is used in film, TV, animation, video games, web design, print illustration and more. The US-based company developed 3D utility for RTFKT’s (now Nike’s) Clone X NFT collection, Champion, Coca-Cola and Warner Bros’ Batman, among others. Daz Studio has also been used to create multiple sell-out collections, including Daz 3D’s own Non-Fungible People (NFP).

Its 3D marketplace has over five million inter-compatible assets for Daz Studio and other 3D applications. The company offers hobbyists and professionals the tools they need to create high-quality 3D renders and animations, with customisable content that can be exported to other applications, all through a user-friendly interface. This easy-to-use software and its extensive material and texture library make realistic material renders possible.

Daz 3D is a versatile suite with rich features and advanced yet easy-to-use tools for customising scenes and 3D objects. It provides both a figure platform and a character engine for producing detailed characters and animated objects.

✔︎How you can action this: utilise this library of assets to create a versatile range of CMF designs that will resonate with a wide variety of consumers.

#Spatial

Spatial’s mission is to build a global community where people can create and share, discover the world around them, and connect with others across the globe. The US-based company has set standards for 3D model types and textures that can be imported into its platform.

Founded in 2016 with roots in workplace collaboration, Spatial is a feature-rich metaverse platform. The venture-backed company offers a VR and web platform for creating one-click NFT galleries and exhibitions. Uploading content to build a world is as simple as dragging and dropping: users can upload videos, 3D models or a selfie to create a digital twin avatar.

Spatial offers several ways to import content onto its platform: uploading it directly via the web app; using Spatial’s integrations with MetaMask, Google Drive or OneDrive; or generating a 3D model by scanning a room or object with the LiDAR scanner on newer iOS devices.

Spatial does not support animated textures such as moving water in imported content, but these effects can be replicated using transparent textures with its specified settings. Such textures do work in preset Spatial environments, such as the home lobby, because those environments use Unity shading. The company hopes to enable Unity plugins for Custom Environments in the future.

✔︎How you can action this: Spatial offers a way to become part of the metaverse community and easily build 3D environments and objects with its rich set of tools.

#NVIDIA Omniverse

NVIDIA Omniverse is a true-to-reality simulation and collaboration platform that is laying the foundation of the metaverse through integrations with popular 3D rendering apps, which will open it up to millions more users. The company sees the metaverse as an immersive, connected, shared virtual world where artists can create one-of-a-kind digital scenes, architects can create beautiful buildings, and product designers can design new products for consumers.

A convincing metaverse experience needs an engine that provides realistic physics, realistic graphics and AI avatars, and NVIDIA GPUs play an important role in simulating all three. Innovations such as real-time ray tracing have set the company apart in bringing photo-realistic 3D graphics to the metaverse.

The Omniverse platform includes NVIDIA Studio and other apps that provide aspiring artists and industry professionals with the tools they need to create optimised 3D content. NVIDIA’s industry-leading GPUs, paired with its exclusive driver technology, work with popular 3D rendering apps like KeyShot, Cinema 4D, Adobe Substance and Blender.

✔︎How you can action this: leverage cutting-edge technologies from NVIDIA to deliver inspiring levels of creative performance.

#Unity 3D

Unity is a leading game development engine used to create and operate interactive, real-time 3D (RT3D) content. Much of Unity’s substantial presence comes from its free 3D creation tools, which are available to everyone. Its goal is to bring the magic of film assets to the individual creator.

Unity has become a staple engine for mobile developers and is now deeply integrated with Apple’s iOS. Building games for devices like the iPhone and iPad requires a different approach from desktop PC games: unlike PC hardware, mobile hardware is standardised and not as fast or powerful as a computer with a dedicated video card.

Unity has built an ecosystem that optimises graphics and processing power so that PC games can be played from anywhere, across any device. This puts Unity in a good position to dominate the metaverse and give it more realistic, high-definition 3D graphics. Unity is democratising the tools and software needed to build and enjoy interactive 3D experiences. With Unity Pro, real-time 3D, AR and VR content can be deployed on HoloLens and Oculus. “We feel strongly that real-time 3D meets the internet is the metaverse,” says Julie Shumaker, Senior Vice President of Revenue at Unity.

Unity powers Decentraland, one of the metaverse pioneers, which has partnered with major brands like Samsung, Dolce & Gabbana, Tommy Hilfiger and Vollebak. Decentraland encourages a low-poly aesthetic so that its digital world is accessible on a wide variety of devices, while Unity adds details such as shadows.

✔︎How you can action this: reach a wide range of people who use a variety of devices with this powerful engine. Take advantage of powerful Apple technologies optimised for Unity-based, interactive 3D experiences.

#Unreal Engine

Unreal Engine is a 3D computer graphics game engine, developed by Epic Games, which enables game developers and creators to realise high-fidelity, real-time 3D content. It is known for its unrivalled visuals, toolset and performance.

Unreal Engine is free to use, with a royalty-based payment system for those who use the engine to sell their games. The engine was originally created largely by Epic Games founder Tim Sweeney for the first-person shooter Unreal, released in 1998, and its code has been extended and improved through many generations of games. Over time, games made with the engine became increasingly popular, as did the engine itself among professional game creators.

The company’s developments include MetaHuman, which lets users easily create high-fidelity digital humans with a free cloud-based app, adjusting and sculpting their features to resemble their own. In addition to this tool, Epic offers the Megascans library of over 16,000 photo-realistic 3D object, material and texture assets.

Unreal Engine provides high-quality, real-time rendering that can be used across many different industries. To get the most out of the engine, a powerful, high-end graphics card is recommended. The recently released Unreal Engine 5 also supports mobile hardware, including Android and iOS devices, with minimal changes.

✔︎How you can action this: trust this established game engine to deliver high-quality results, relying on a team of developers who are adept at using it.

How to action this:

Choose an attainable style

Determine the technology that’s right for you and your objectives, and build digital creations based on the aesthetics that the software and hardware package is capable of generating.

Mix digital and physical details

Materials that are digitally created have the most impact when they have familiar details from the physical world, which are amplified with fantastical elements that can only exist digitally.

Partner with experts

Higher-fidelity graphics require specialised skill sets, which companies should seek out along with the right hardware, software and a material library capable of delivering impactful graphics.

Invest in the future

Keep an eye on software and hardware developments in this space as technology is rapidly evolving. Be ready to invest in software, equipment, services and talent that can deliver futuristic, high-fidelity experiences.

Article powered by WGSN.com

Co-created by

WGSN

WGSN is the world's leading consumer trend forecaster. Our accurate forecasts provide global trend insights, expertly curated data and industry expertise to help our clients understand consumer behaviour and lifestyles, create products with confidence and trade at the right time.
